Ethernet Network

A single high-bandwidth, low-latency network such as the local Fast IB interconnect supports HPC and Big Data workloads efficiently, but on its own it would not provide the flexibility of the Ethernet protocol. Applications that cannot use the interconnect's native protocol, and must therefore fall back on an IP emulation layer, benefit in particular from an Ethernet-based network.

An additional Ethernet-based network also provides the robustness and resiliency needed for management tasks inside the system.

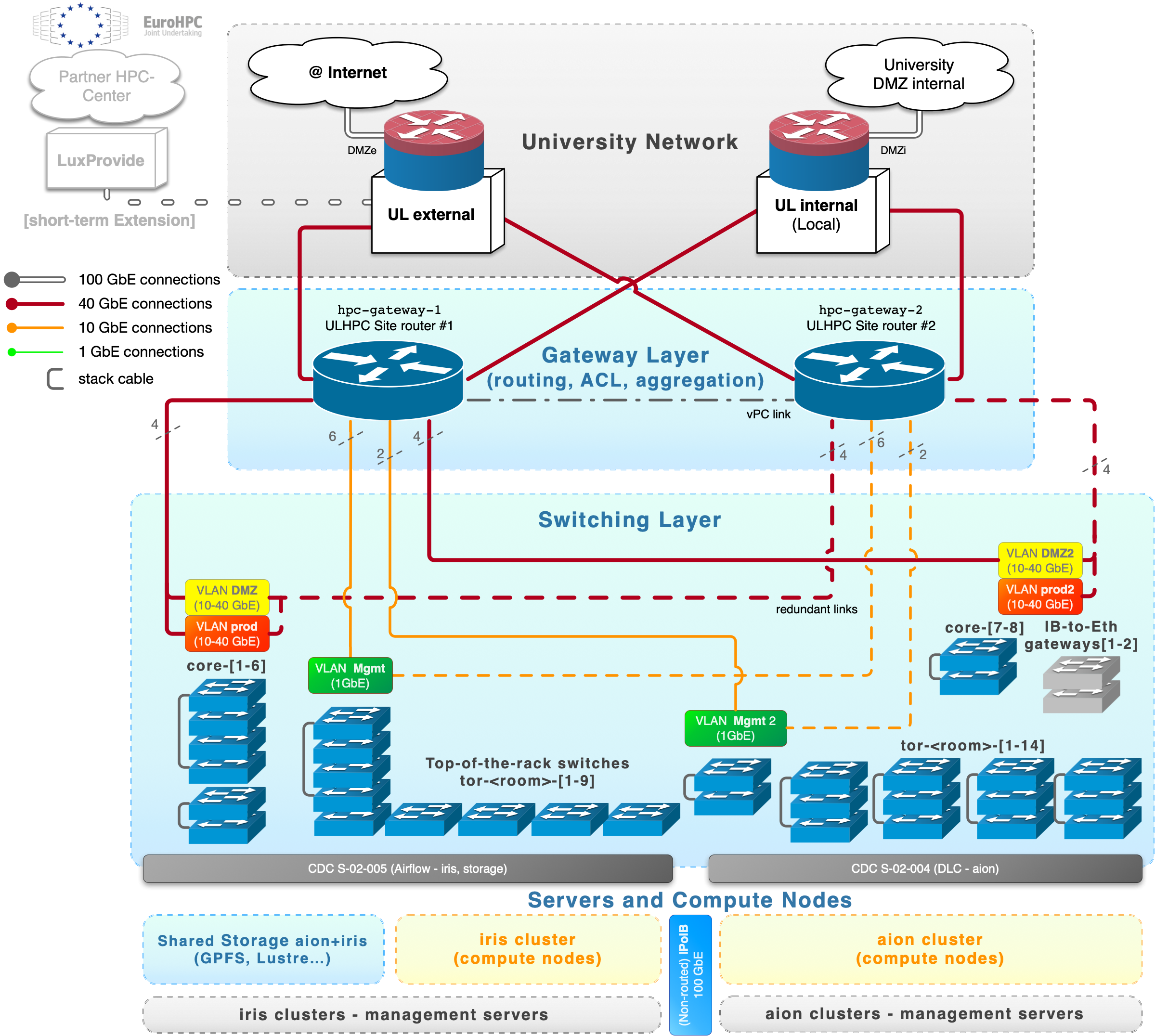

Alongside the Fast IB interconnect used inside the clusters, we maintain an Ethernet network organized as a 2-layer topology:

- one upper level (Gateway Layer) providing routing, switching, network isolation and filtering (ACL) rules, and meant to interconnect only switches.

    - This layer is handled by a redundant set of site routers (ULHPC gateway routers).

    - It interfaces with the University network for both internal (LAN) and external (WAN) communications.

- one bottom level (Switching Layer) composed of the [stacked] core switches as well as the TOR (Top-of-Rack) switches, meant to interface the HPC servers and compute nodes.
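The two layers described above can be modeled as a small graph to make the "gateway routers interconnect only switches" rule explicit. The following is a minimal sketch, not the actual ULHPC configuration: all device names (`gw-1`, `core-stack`, `tor-1`, `node-001`, ...) and the exact wiring between routers, core switches and TOR switches are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field

# Layer names mirror the description above; every device name is hypothetical.
GATEWAY = "gateway"      # Gateway Layer: redundant site routers
SWITCHING = "switching"  # Switching Layer: core and TOR switches
HOST = "host"            # HPC servers and compute nodes


@dataclass
class Topology:
    layer_of: dict = field(default_factory=dict)  # device name -> layer
    links: set = field(default_factory=set)       # set of frozenset({a, b})

    def add_device(self, name: str, layer: str) -> None:
        self.layer_of[name] = layer

    def connect(self, a: str, b: str) -> None:
        if a == b:
            raise ValueError("a link needs two distinct devices")
        self.links.add(frozenset((a, b)))

    def violations(self) -> list:
        """Return links that attach a gateway router directly to a host."""
        bad = []
        for link in self.links:
            a, b = sorted(link)
            layers = {self.layer_of[a], self.layer_of[b]}
            if GATEWAY in layers and HOST in layers:
                bad.append((a, b))
        return sorted(bad)


def build_example() -> Topology:
    """Build an illustrative topology (assumed wiring, not the real one)."""
    topo = Topology()
    for router in ("gw-1", "gw-2"):
        topo.add_device(router, GATEWAY)
    for switch in ("core-stack", "tor-1", "tor-2"):
        topo.add_device(switch, SWITCHING)
    for node in ("node-001", "node-002"):
        topo.add_device(node, HOST)

    # Assumption: both redundant routers uplink to the stacked core switches.
    topo.connect("gw-1", "core-stack")
    topo.connect("gw-2", "core-stack")
    # Assumption: TOR switches hang off the core stack and serve the nodes.
    topo.connect("core-stack", "tor-1")
    topo.connect("core-stack", "tor-2")
    topo.connect("tor-1", "node-001")
    topo.connect("tor-2", "node-002")
    return topo
```

Calling `build_example().violations()` returns an empty list for this wiring. Adding a direct router-to-node link, for example `topo.connect("gw-1", "node-001")`, makes that link show up in the result.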

The accompanying figure gives an overview of this topology.

ACM PEARC'22 article

For more details on the implemented Ethernet network, refer to the following article, published at the ACM PEARC'22 conference (Practice and Experience in Advanced Research Computing) in Boston, USA, on July 13, 2022.

ACM Reference Format:

Sebastien Varrette, Hyacinthe Cartiaux, Teddy Valette, and Abatcha Olloh. 2022. Aggregating and Consolidating two High Performant Network Topologies: The ULHPC Experience. In Practice and Experience in Advanced Research Computing (PEARC '22). Association for Computing Machinery, New York, NY, USA, Article 61, 1–6. https://doi.org/10.1145/3491418.3535159